Junior Research Group | MoveGroup

Integration & Analysis of Multimodal Sensor Signals and Clinical Data for Diagnostics and Investigation of Neurological Movement Disorders

"Differentiating motor, cognitive, and sensory abilities is of great importance in assessing functionality in the elderly. Thus, causalities between cognitive and motor deficits can be identified, enabling specific, resource-oriented therapeutic approaches."

Dr. Sebastian Fudickar

Head of the Junior Research Group MoveGroup

About the Project

Considering motor, cognitive, and sensory abilities separately supports more specific, resource-oriented therapy and helps researchers better understand the causal relationships and interactions between cognitive and motor deficits, which burden patients with Tourette's, Parkinson's, or dementia, among others.

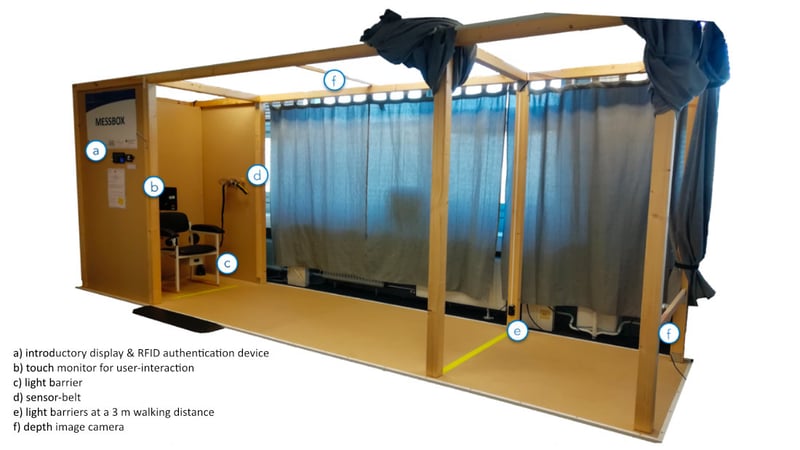

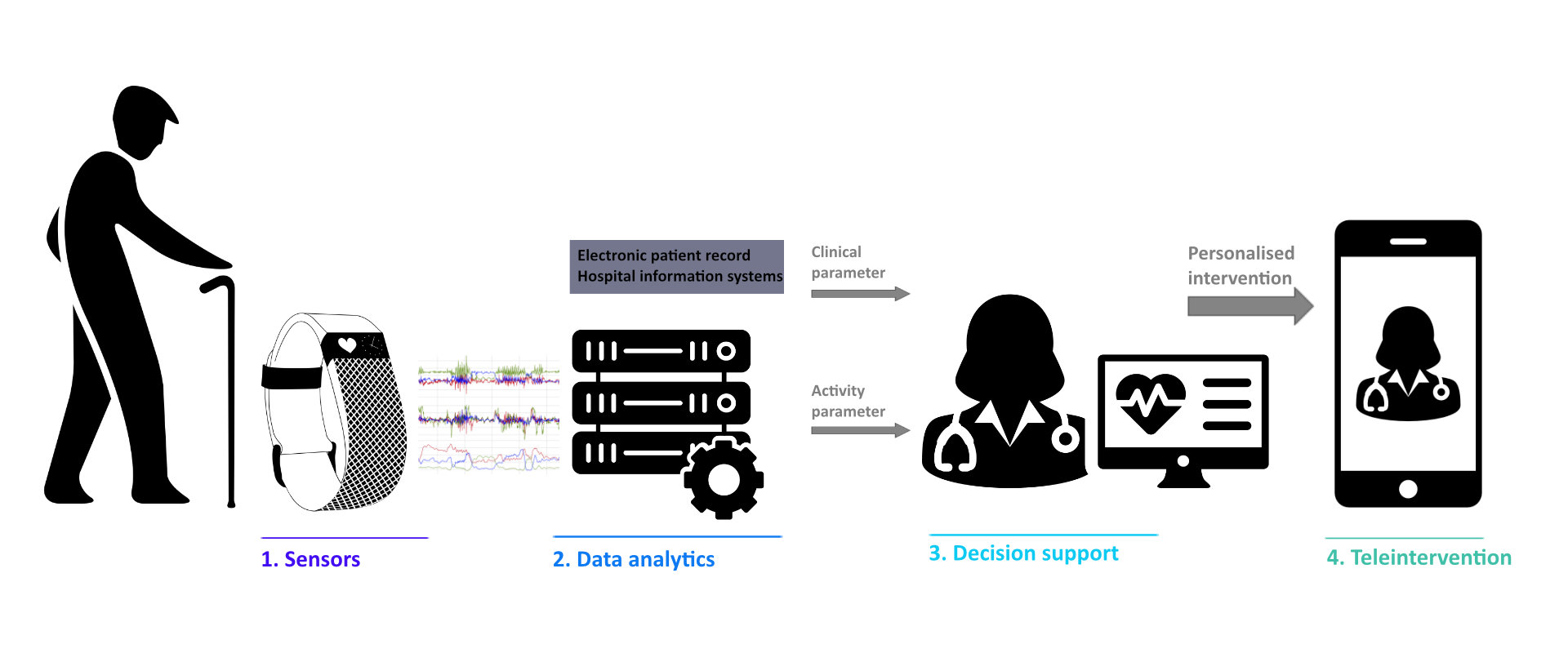

The junior research group (JRG) MoveGroup, funded as part of the HiGHmed Consortium, is designing and evaluating a multimodal sensing platform that collects monitoring data from which these motor, cognitive, and sensory abilities can be inferred. Identification and fusion algorithms that measure functional abilities multimodally, using near-body and ambient sensing, will be prototyped and evaluated with relevant cohorts to improve the understanding of normal aging and of abnormal individual trajectories.

The future use of these data in clinical documentation, diagnosis, and therapeutic processes requires successful data integration and harmonization via agreed structural standards (e.g., openEHR, HL7 FHIR) and semantic standards (e.g., SNOMED CT, WHO ICF). The JRG therefore aims to specify and prototype sensor-based movement models and profiles suitable for care and research processes. Depending on the research question or disease pattern, further required subject or patient data will be integrated, in each case in compliance with data protection and ethical regulations.

Based on the sensor platform and the data integration, AI-based decision support for the medical care of patients with movement disorders will be implemented and evaluated. Using machine learning models built on the analyzed multimodal movement data and clinical data, a decision support system for treatment planning and an event monitoring system with an alarm function will be prototyped in the care context.

Goals

The JRG designs, implements, and evaluates new methods for integrating and analyzing multimodal sensor signals and clinical data for the diagnosis and study of movement disorders. The scientific goals and research activities of the project are structured along the following three main goals:

Goal 1: Sensor-based acquisition and modeling of body movements

Building a multimodal sensor platform for detailed acquisition of body movements and developing an algorithmic processing chain for sensor data fusion and feature extraction will enable a precise, quantitative analysis of body movements.

Goal 2: HiGHmed-compliant data integration and exploitation

To integrate and exploit sensor-based motion models and profiles, clinical data, including additionally collected multimodal sensor data, will be processed in compliance with data protection and ethical regulations. For this purpose, the IT interfaces and functions of the Medical Data Integration Center at the University Medical Center Schleswig-Holstein (UKSH MeDIC) are used for relevant care and research processes.

Goal 3: Decision support and knowledge gain with AI methods

To develop AI-based decision support for the medical care of patients with movement disorders, machine learning models will be developed based on the collected multimodal movement data and integrated into a corresponding event monitoring system.

Contact us!

Successful implementation and validation depend on close cooperation with clinical partners. If you are interested in such a cooperation, please contact us.

Further information

Dr. Sebastian Fudickar

Head of Junior Research Group MoveGroup | University of Lübeck | Institute of Medical Informatics